Nine Californians will soon sort truth from theater in a case that asks whether Sam Altman can be trusted to steer OpenAI at the very moment artificial intelligence is shaping markets, policy, and public expectations with unprecedented speed and force. The suit, filed by Elon Musk, takes a founder’s quarrel and turns it into a referendum on leadership integrity, corporate design, and the uneasy truce between mission and money. At issue is more than who promised what; it is whether a lab that began with nonprofit ideals can responsibly leverage for-profit tools, heavyweight partners, and relentless growth without losing its soul. With an IPO expected before year-end and rival platforms in hot pursuit, the verdict will land in a climate already primed to judge technology leaders not only by results, but by the structures they build to contain their own power.

Origins and Founding Vision

OpenAI’s story began with a provocation: build a nonprofit counterweight to DeepMind that would pursue safe AI in the public interest and keep frontier research from being locked inside one corporate fief. The founders—Elon Musk, Sam Altman, and Greg Brockman—cast the project as mission-first, committed to openness where feasible and guarded where safety demanded it. Musk contributed roughly $40 million, pressed for the “OpenAI” brand, and helped recruit Ilya Sutskever, cementing his role as financier, evangelist, and talent magnet. Those early commitments established a strong moral narrative: resource the lab generously, publish ambitiously when safe, and treat safety research as a public good rather than a moat.

Building on this foundation proved harder than the talking points suggested. As model training ballooned in cost and complexity, the founders confronted a structural riddle: how to fund compute-intensive research without surrendering mission. A nonprofit alone could not bankroll frontier-scale clusters or long-horizon bets, yet any for-profit arm risked warping incentives. Emails and strategy notes from the early years reveal debates over governance, publication norms, and partner selection, with Microsoft and Amazon both considered. The founders’ shared fear of concentrated AI power—initially aimed at DeepMind—shadowed their own choices, foreshadowing later conflict about who would hold the reins and what “openness” meant when safety and competition collided.

Mission, Money, and the Microsoft Question

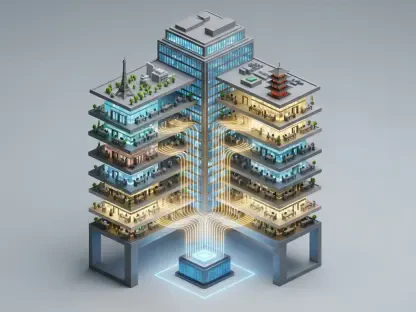

The lawsuit turns that strategic dilemma into a personal indictment. Musk argues Altman and Brockman diverted a nonprofit into a “for-profit, market-paralyzing gorgon,” binding OpenAI too tightly to Microsoft and straying from the public-benefit promise. He claims his financial support and brand equity were leveraged to build a structure that ultimately cut him out while courting a single dominant partner. OpenAI counters that Musk backed the for-profit concept early to secure the massive compute required for training, then departed after seeking control others would not grant. The company points to hybrid governance as a practical tool: a nonprofit in charge of a mission-locked, capital-raising entity, with guardrails designed to preserve purpose while scaling.

Discovery has muddied absolutist claims. Emails suggest Musk previously favored Microsoft over Amazon as the preferred cloud backer, undercutting the notion that the alliance emerged as a stealth pivot. Internal notes attributed to Brockman capture ethical anxiety about changing the corporate chassis without Musk’s blessing, alongside candid reflections on power dynamics and personal incentives. Meanwhile, doubts about Altman’s candor—surfacing in a brief 2023 board ouster, accusations by the first chief scientist, and Musk’s “swindler” taunt—linger as context rather than conclusion. Altman has framed the courtroom as a cleansing arena, asserting that sworn testimony and contemporaneous records can sort misremembered conversations from deliberate misdirection.

How the Trial Will Unfold

The mechanics are straightforward, the implications less so. A jury will decide liability on Musk’s claims against Altman, Brockman, and OpenAI, and a judge will weigh remedies later if the verdict goes Musk’s way. The witness slate reads like a governance syllabus: Shivon Zilis and Jared Birchall to explain Musk’s role and financing; Ilya Sutskever to revisit the lab’s formative period; Bret Taylor to outline the current board and oversight model; Satya Nadella and Kevin Scott to detail Microsoft’s collaboration and boundaries; and Mira Murati to recount the 2023 leadership crisis. Their testimony promises texture on how decisions were actually made, who set the guardrails, and when lines between mission, money, and market leverage were crossed or prudently respected.

Musk’s own business repositioning will hover over the case. Since filing suit, he has consolidated xAI with X under SpaceX, which has filed for an IPO, a configuration that could channel capital and data streams into his AI push while he litigates against OpenAI. Defense counsel will argue that this makes the suit strategic as much as principled. On remedies, Musk asks the court to unwind structural changes, remove Altman and Brockman, and force disgorgement to realign the enterprise with its nonprofit origin. OpenAI will counter that its recapitalization into a public benefit corporation, with the nonprofit retaining control, embeds mission in the charter, not merely the marketing, and that diversified deals beyond Microsoft signal independence rather than capture.

The Stakes and Next Steps

The verdict will reverberate beyond two founders. A finding for Altman would legitimize hybrid governance as a credible means to finance frontier AI through capped returns and mission-anchored oversight, even when a single partner like Microsoft remains central for compute and product distribution. A finding for Musk could catalyze deeper reforms: narrower IP-sharing terms with platforms, stricter board independence, and clearer triggers for leadership changes when candor wavers. Either path will inform how labs structure rights to safety research and who can veto deployments of high-stakes systems. Media framing has cast the conflict as a battle for a corporate soul; the jury’s narrower ruling will still shape how the industry calibrates disclosure, alignment, and power.

To convert spectacle into progress, several steps are within reach of leaders, investors, and regulators. Boards could formalize disclosure protocols for high-risk model releases, with independent red teams reporting directly to directors. Partnerships could be time-boxed, with mandatory competition reviews and sunset clauses on exclusivity. Charters could specify “break-glass” controls (temporary throttles on API scale or feature rollouts) tied to well-defined safety thresholds. Investors could demand audit rights over safety spending and compute usage. And regulators could establish transparent safe-harbor rules for sharing pre-deployment risk findings. Pursuing these measures would translate a courtroom drama into firmer guardrails for trustworthy AI leadership.