Desiree Sainthrope is a distinguished legal expert whose career has been defined by the intricate work of drafting trade agreements and navigating the complexities of global compliance. With a deep focus on intellectual property and the transformative power of emerging technologies, she has become a leading voice on how law firms adapt to digital disruption. Her perspective blends traditional legal rigor with a forward-looking approach to the tools that are reshaping the industry’s landscape.

This conversation explores the strategic evaluation of artificial intelligence within highly regulated environments, examining how organizations distinguish between competing large language models. We delve into the methodologies for benchmarking public versus legal-specific tools, the internal collaboration required to build custom assessment frameworks, and practical advice for firms looking to initiate their own AI testing protocols.

Professionals often alternate between different large language models to fact-check outputs and identify specific weaknesses. When using one engine to critique another, what specific criteria determine which model is most reliable for a particular task, and how do you document these evolving performance gaps over time?

The reliability of a model often reveals itself through a process of cross-examination where we treat the outputs of one engine as raw material for another. For instance, if I receive a complex analysis from Gemini, I will immediately feed that document into Claude or ChatGPT with the explicit instruction to find every inaccuracy or logical fallacy within it. This iterative loop allows us to see exactly where a model like Gemini might hallucinate or where a tool like Claude might excel in nuanced reasoning. Over time, you begin to recognize distinct patterns, such as one model being superior for deep research while another is more adept at structural critique. Documenting these gaps takes discipline: we consistently record each model’s strengths and weaknesses against specific categories of work, so that we are always using the most effective “second pair of eyes.”

With vendor requests increasing and products often sharing similar data sets, marketing claims can become indistinguishable. What specific questions should an organization ask to uncover a vendor’s unique value proposition, and what red flags suggest a tool lacks true differentiation from its competitors?

In an environment where I might receive three or more vendor requests every single week, the sheer volume of “similar” offerings becomes a significant hurdle. The most critical question we now lead with is: “What specifically differentiates you from every other company out there?” It is a red flag when a vendor relies on generic marketing language or cannot explain how their underlying data or processing logic varies from the standard models we already use. Many tools start looking exactly alike because they are built on the same foundation, so if a vendor cannot articulate a unique value proposition, it suggests their tool lacks true innovation. We look for a clear, technical explanation of their data edge or a specific functional advantage that justifies adding yet another platform to our tech stack.

Public AI tools often excel at general research, while legal-specific platforms show more precision with case law and statutory judgments. How should a firm structure a benchmarking study to measure these trade-offs, and what metrics are most useful for evaluating accuracy, citations, and structural completeness?

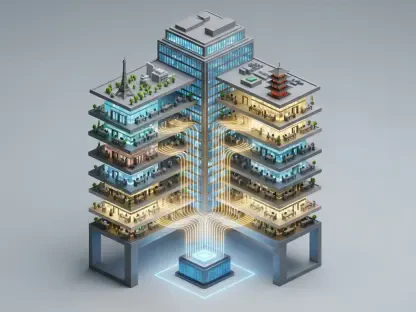

Structuring a successful benchmarking study involves testing a diverse range of tools, from public options like Perplexity and OpenAI to legal-specific giants like Harvey, LexisNexis, and Thomson Reuters. We have found that the best approach is to run a variety of queries across different legal domains, evaluating the results based on five core pillars: accuracy, citations, style, structure, and completeness. Our research shows a clear divide where public tools perform exceptionally well on general research questions where knowledge is widely available, whereas legal-specific tools are far more precise when dealing with statutory judgments and case law. By capturing these metrics side-by-side, we can visualize the trade-offs and ensure that our attorneys understand which tool is appropriate for a specific legal task.
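To make the side-by-side comparison concrete, here is a minimal sketch of how graded answers might be aggregated across the five pillars. It assumes each answer receives a reviewer score on a 1 to 5 scale for every pillar and that all pillars are weighted equally; the `Grade` record, the tool labels, the categories, and every number in `SAMPLE_GRADES` are hypothetical placeholders invented for illustration, not results from the study described above.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean

# The five pillars named in the interview; equal weighting is an assumption.
PILLARS = ("accuracy", "citations", "style", "structure", "completeness")


@dataclass
class Grade:
    tool: str               # hypothetical tool label
    category: str           # e.g. "general research" or "case law"
    scores: dict[str, int]  # pillar -> reviewer score on an assumed 1-5 scale


def summarize(grades):
    """Average each pillar for every (tool, category) pair."""
    buckets = defaultdict(list)
    for g in grades:
        missing = set(PILLARS) - set(g.scores)
        if missing:
            raise ValueError(f"{g.tool}: no score for {sorted(missing)}")
        buckets[(g.tool, g.category)].append(g.scores)
    return {
        key: {p: mean(row[p] for row in rows) for p in PILLARS}
        for key, rows in buckets.items()
    }


def overall(pillar_avgs):
    """Unweighted mean across the five pillars."""
    return mean(pillar_avgs[p] for p in PILLARS)


def best_per_category(summary):
    """Pick the tool with the highest overall score in each category."""
    best = {}
    for (tool, category), avgs in summary.items():
        score = overall(avgs)
        if category not in best or score > best[category][1]:
            best[category] = (tool, score)
    return {category: tool for category, (tool, _) in best.items()}


# Invented scores for illustration only; not results from any study.
SAMPLE_GRADES = [
    Grade("public_tool", "general research",
          {"accuracy": 5, "citations": 3, "style": 4, "structure": 4, "completeness": 4}),
    Grade("legal_tool", "general research",
          {"accuracy": 4, "citations": 4, "style": 3, "structure": 4, "completeness": 3}),
    Grade("public_tool", "case law",
          {"accuracy": 2, "citations": 2, "style": 4, "structure": 3, "completeness": 3}),
    Grade("legal_tool", "case law",
          {"accuracy": 5, "citations": 5, "style": 3, "structure": 4, "completeness": 4}),
]

if __name__ == "__main__":
    summary = summarize(SAMPLE_GRADES)
    for (tool, category), avgs in sorted(summary.items()):
        print(f"{category:17} {tool:12} overall={overall(avgs):.2f}")
    print(best_per_category(summary))
```

Keeping per-pillar averages, rather than collapsing straight to one number, preserves the trade-off view: a tool can lead on accuracy yet trail on citations within the same category, and a firm may prefer to weight the pillars differently than this sketch does.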

Highly regulated organizations are increasingly collaborating with internal IT teams to build custom benchmarking tools for ongoing evaluation. What are the primary technical challenges in developing these proprietary systems, and how do you ensure the rubric stays relevant as models update their underlying capabilities?

The shift toward building proprietary benchmarking tools stems from the fact that our requirements are becoming increasingly bespoke and the legal landscape is constantly shifting. One of the primary technical challenges is ensuring that the tool can interface effectively with various LLMs while maintaining the security standards required by a highly regulated firm. We work closely with our IT teams to develop these internal systems so we can conduct evaluations on an ongoing basis rather than as a one-off project. To keep the rubric relevant, we treat it as a living document that we update as models release new versions, ensuring that our performance metrics reflect the current state of the technology. This constant refinement allows us to maintain a high standard of precision that generic, external benchmarks simply cannot provide.

Implementing a full-scale AI evaluation can be daunting due to the significant time commitment required. If an organization wants to start small by testing a limited set of questions against a few tools, what step-by-step process ensures the results are scalable and provide a clear understanding of performance?

The key to overcoming the “blank page” problem in AI evaluation is to start with a manageable scope that can be replicated as you grow. I recommend starting small by selecting a single category of legal work and pulling 20 representative questions from your existing research or knowledge base. Next, select a few AI tools and run those questions through them, ranking the answers according to a consistent set of criteria like accuracy or citation quality. By repeating this process a couple of times, you establish a reliable baseline and a rubric that can be applied to other areas of law later on. This consistent, incremental approach provides a clear understanding of tool performance without the exhaustion of a firm-wide rollout, making the data much more actionable for decision-makers.
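The small-scale pilot can be sketched as a simple ranking loop. This is a hedged illustration, not a prescribed tool: it assumes a hypothetical `grade(tool, question)` callable that returns a reviewer’s score on one consistent criterion (for example, accuracy), ranks the tools on each question, repeats the whole pass a few times, and reports each tool’s average rank plus whether the same tool led every round. The question list, tool names, and the noisy stand-in grader are placeholders; in practice attorneys would supply the scores.

```python
import random
from statistics import mean


def run_round(questions, tools, grade):
    """Grade every tool on every question; return each tool's mean rank (1 = best)."""
    ranks = {tool: [] for tool in tools}
    for question in questions:
        scores = {tool: grade(tool, question) for tool in tools}
        # Highest score ranks first; ties keep the order given in `tools`.
        ordered = sorted(tools, key=lambda tool: scores[tool], reverse=True)
        for position, tool in enumerate(ordered, start=1):
            ranks[tool].append(position)
    return {tool: mean(r) for tool, r in ranks.items()}


def baseline(questions, tools, grade, rounds=3):
    """Repeat the round, average the mean ranks, and check whether the leader was stable."""
    per_round = [run_round(questions, tools, grade) for _ in range(rounds)]
    averaged = {tool: mean(r[tool] for r in per_round) for tool in tools}
    leaders = {min(r, key=r.get) for r in per_round}
    stable_leader = leaders.pop() if len(leaders) == 1 else None
    return averaged, stable_leader


if __name__ == "__main__":
    # Placeholder pilot: 20 questions from one practice area, three unnamed tools.
    questions = [f"contracts question {i}" for i in range(1, 21)]
    tools = ["tool_a", "tool_b", "tool_c"]
    quality = {"tool_a": 3.5, "tool_b": 3.0, "tool_c": 2.5}  # invented values
    rng = random.Random(7)

    def noisy_grade(tool, question):
        # Stand-in for a reviewer's score; replace with real human grading.
        return quality[tool] + rng.uniform(-1, 1)

    print(baseline(questions, tools, noisy_grade))
```

Reporting whether the leader held across rounds is one simple way to tell a reliable baseline from a lucky single pass; if the leader changes between repetitions, the pilot probably needs more questions or a tighter rubric before it is extended to other areas of law.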

What is your forecast for AI evaluation in the legal industry?

I believe we are moving toward a future where “evaluation” is no longer a separate phase of software procurement, but a continuous, automated feature of the legal workflow. We will likely see firms move away from manual benchmarking and toward sophisticated, internal AI-driven dashboards that monitor the accuracy and reliability of every model in real time. As the market becomes even more crowded, the ability to ruthlessly audit these tools will be the primary differentiator between firms that achieve true efficiency and those that merely accumulate expensive subscriptions. Ultimately, the winners will be the organizations that stop treating AI as a “black box” and start treating it as a performance-managed asset that must prove its value every single day.