Desiree Sainthrope is a formidable voice in the legal technology landscape, bringing years of expertise in drafting trade agreements and navigating the labyrinth of global compliance. Her deep understanding of intellectual property and the shifting tides of artificial intelligence makes her a crucial guide for those trying to make sense of the current consolidation wave in the industry. As the legal field moves away from static databases toward dynamic, reasoning-capable systems, her insights provide a roadmap for how technology is fundamentally rewriting the rules of practice.

Legora recently integrated both Walter AI and Qura into its operations. How does a strategy of rapid, specialized acquisitions help a company build a comprehensive legal AI stack, and what are the specific technical benefits of merging niche, AI-native infrastructures into a single platform?

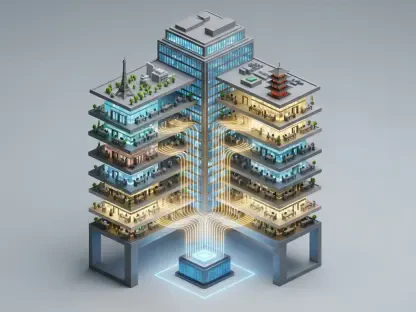

The momentum Legora has generated following its $550 million Series D funding round is a clear signal that the race to build a definitive legal AI stack is won through strategic depth, not just raw speed. By acquiring specialized players like Walter AI and Qura, a company can bypass the years of trial and error required to build “AI-native” foundations from scratch. This strategy allows a $5.55 billion entity to stitch together highly refined tools that were built from the ground up to be navigated by large language models, rather than trying to retrofit old software with modern plugins. Technically, this integration means the platform can handle everything from the initial communication with individual courts to the final parsing of complex precedents within a unified environment. It creates a seamless flow where the “legal reasoning” capabilities of one acquisition bolster the research infrastructure of another, resulting in a powerhouse that feels intuitive to the practitioner rather than a disjointed collection of tools.

Using large language models to reason over current law represents a shift from traditional keyword search. How does applying legal reasoning to data annotation change the quality of insights for practitioners, and what are the primary challenges when using LLMs to parse complex precedents across different jurisdictions?

The shift from a “glorified search engine” to a system that can actually reason over current law is like moving from a simple index to a living, breathing legal assistant. When we apply legal reasoning to data annotation, we aren’t just tagging a document with a date and a title; we are allowing the AI to identify the underlying authority and the weight of a precedent in real time. This level of precision was previously impossible, but now it allows a lawyer to see the “why” behind a search result, which saves hours of manual verification and deepens the quality of their strategy. However, the friction arises when you try to harmonize these models across various jurisdictions, as the linguistic nuances and procedural differences between, say, Swedish law and American law are immense. The challenge lies in ensuring the AI doesn’t just translate words, but truly understands the specific legal logic of 27 different jurisdictions to provide accurate, high-stakes advice.

Moving from regional markets like Sweden and the EU to the United States requires significant scaling. What specific steps are necessary to adapt a legal research database for the American market, and how do network effects impact the functionality of a central operating system for cross-border legal work?

Scaling into the United States is a massive undertaking that requires more than just adding new documents; it demands a total reconfiguration of how the AI understands the vast, multi-layered American judicial system. To make this work, the engineering team must embed their specialized research capabilities into a broader platform that can handle the sheer volume of U.S. case law while maintaining the “network effects” that make cross-border data valuable. When legal workflows are orchestrated in one central operating system, every piece of data from a competition law case in the EU adds a layer of intelligence that can potentially inform a similar strategy in the States. There is a tangible sense of momentum when these workflows are unified, as it allows global firms to maintain a single “source of truth” regardless of where their practitioners are located. It’s about building a gravity well of data where the more information the system processes, the more indispensable it becomes to the global legal market.

Large funding rounds, such as those exceeding $500 million, are currently fueling an acquisition boom in the legal tech sector. How is this massive influx of capital shifting the competitive balance between startups and legacy providers, and what unique value do small, specialized engineering teams bring to multi-billion-dollar organizations?

The sheer volume of capital, like the half-billion dollars Legora secured, is creating a wider chasm between the agile, AI-first startups and the legacy providers who are often tethered to aging, clunky infrastructures. While legacy providers have the advantage of history, these massive funding rounds allow newcomers to simply buy the best talent and technology on the market, effectively leapfrogging decades of traditional development. A small, elite team of roughly 10 engineers, like the group from Qura, brings a level of specialized intensity and “AI-native” thinking that is often stifled in larger, more bureaucratic organizations. These teams are the “special forces” of legal tech, providing the technical breakthroughs in document tagging and precedent parsing that can be scaled across the user base of a multi-billion-dollar company. It is a fascinating dynamic where the financial muscle of a giant is used to protect and amplify the innovative spark of a handful of brilliant minds from places like the KTH Royal Institute of Technology or Università Bocconi.

What is your forecast for the legal AI industry?

I anticipate that the industry is heading toward a period of “hyper-consolidation” where the distinction between legal research, document drafting, and case management completely disappears. Within the next few years, we will see the emergence of a “central operating system for law” that doesn’t just assist with tasks but actively predicts jurisdictional risks and suggests litigation strategies across dozens of borders simultaneously. The legacy providers who fail to move beyond keyword-based search will likely be absorbed or rendered obsolete by these well-funded, AI-native platforms that can reason over current law with the speed of a machine and the nuance of a senior partner. We are essentially witnessing the birth of a new standard for professional excellence where the “human in the loop” is empowered by an incredibly sophisticated, reasoning-capable engine that handles the heavy lifting of data analysis.